What is design thinking?

Simply put, design thinking is a human-centered approach to solving complex problems. It starts with a specific goal and moves through multiple iterative stages of diverging and converging. Design thinking typically includes approaches like observation, interviews, brainstorming and prototyping. Tim Brown, CEO and president of IDEO, gives this definition of design thinking’s role within business: “Design thinking can be described as a discipline that uses the designer’s sensibility and methods to match people’s needs with what is technologically feasible and what a viable business strategy can convert into customer value and market opportunity.”

What consumer research innovation challenges can design thinking help solve?

How can researchers apply design thinking to innovation-focused research?

1. Observation and interviews. These approaches help build gut-level consumer understanding to allow the team to design solutions that will delight consumers, without asking consumers to predict the future.

2. Brainstorming, co-creation and prototyping. These approaches help avoid the trap of waiting for a final or “perfect” product before engaging consumers, and they help consumers react to something that does not yet exist.

3. Problem definition and iteration. These approaches help both to design the most effective consumer research up front and to keep the flexibility to learn over time, without needing to have everything figured out before ever talking with a consumer.

Building solutions

Consumer research for innovation brings certain challenges, but by applying key design thinking principles, researchers can approach the learning process differently. Doing so not only helps overcome these difficulties, but also builds stronger, more compelling solutions for the consumers they serve.


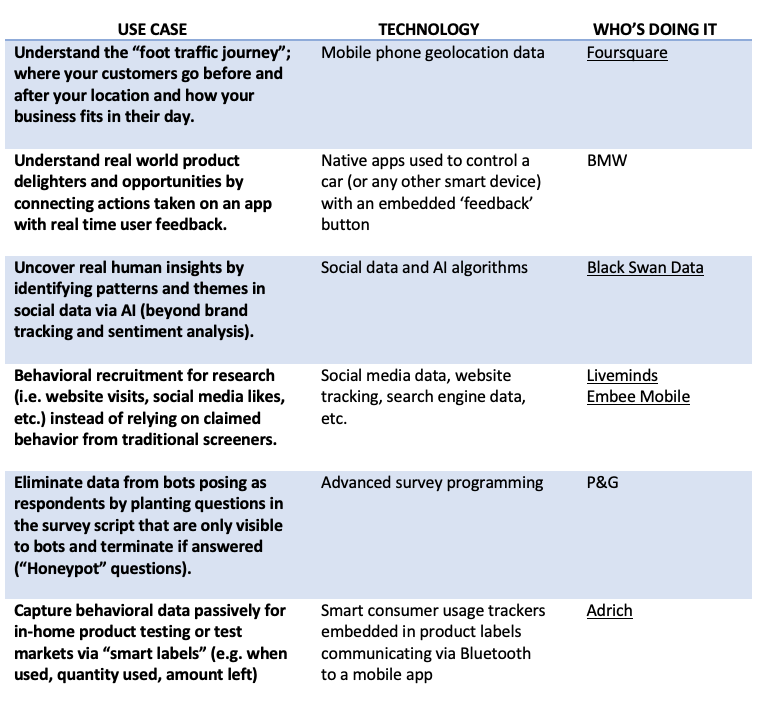

As a consumer researcher, I’m fascinated by the growing application of machine learning and automation to our industry, but a lot of it still seems very theoretical for the typical research generalist. I had the opportunity to attend the NA MRMW (Market Research in a Mobile World) Conference this month and was excited by all the examples that people shared of how they are applying AI and automation in their businesses today.

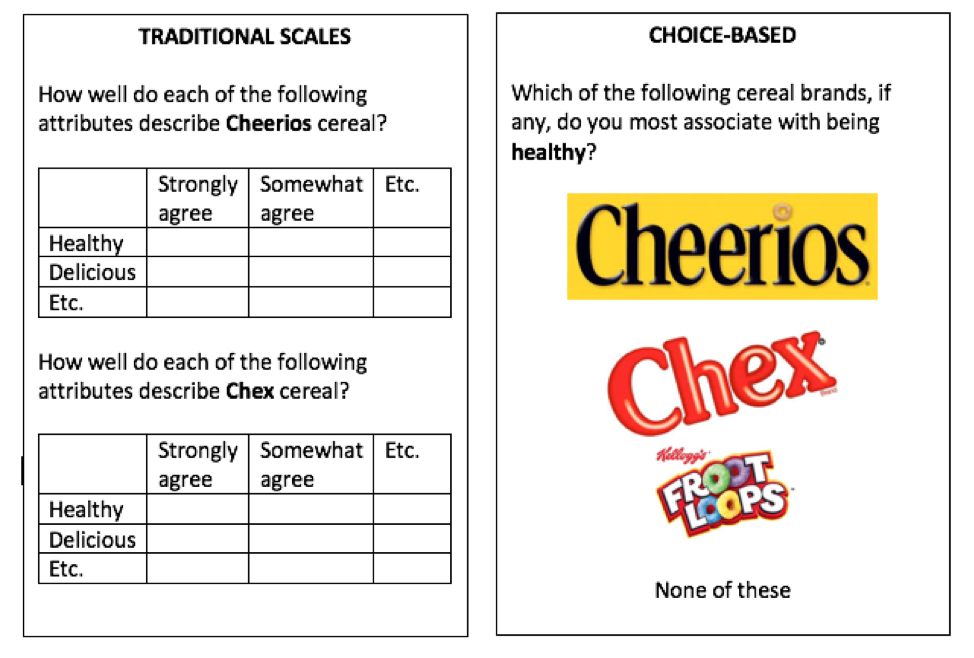

Below, I’ve captured just a few highlights of some tangible examples that inspired me and my non-technical take on the technology involved, as well as who shared the use case or capability at the conference. If this piques your interest, I encourage you to dig further into some of the companies below, attend a future MRMW conference, and look for application opportunities in your own business.

An overriding theme in market research innovation is getting closer to the way people actually think, feel, and behave. We’re seeing more techniques and approaches that allow us to observe and measure versus ask. The body of evidence for this shift is extremely compelling, especially when combined with the latest cognitive and behavioral science advances.

One way to do this within a quantitative research context is to focus on respondent choice as a measure, versus traditional scale-based responses. At a recent market research industry conference, Dave Santee of True North Market Insights gave three compelling arguments against traditional Likert scales: they are not comparative or in context; respondents dislike them and don’t make decisions that way; and different groups and cultures use scales differently.

Multiple expert sources agree that research approaches that ask respondents to make a selection from a relevant choice set are more predictive than research designs that ask respondents to rate or score those same options on a scale. We know that our brains love shortcuts: we operate in deselection mode when shopping, not rating each and every product before arriving at a decision. By the way, this approach also gets around the dreaded matrix question!

Here is a simple example that works within a traditional survey framework (truncated just to make the point):

There are trade-offs. If you’re used to getting a rating for every attribute for every brand, that won’t happen in this type of research design. But if the findings more accurately reflect the way that people feel and act, that seems like a good trade-off to make. The benefits will go to those who can convince their organization to throw away the old scorecards, dashboards, and databases. As an ex-corporate researcher, I understand that’s no easy feat!

In addition to restructuring questions for choice (versus scales) within a traditional survey framework, there are other, entirely choice-centric research methodologies, including MaxDiff, Conjoint, Prediction Markets, and Virtual Shelf/Store research. These rely exclusively on respondent choice for data collection (but can also be supplemented by direct questions within the broader study).

Virtual shelf tests are something I’ve personally been doing a lot more of recently. They are my preferred way of doing packaging testing, but I’ve also used them for planogram/shelf set design and even new product qualification (in lieu of a traditional concept test). Instead of asking purchase intent or other scale-response questions, respondents simply shop for their desired product(s) from a category shelf set, generating product/package selection and spending metrics.

This type of testing doesn’t have to be restricted to “shelves” if that’s not relevant for your category or brand. The key principle is to put the product (or service) in the context in which consumers will actually evaluate it and make the purchase decision. Other examples might include: mocked-up Amazon.com pages of products, restaurant menus, or website lists of services (don’t forget to include prices!).

I have a very classical CPG market research background, so I cut my teeth on survey scales and grids and live for the thrill of a top quintile purchase intent score, but I also love learning about new methodologies and techniques that get us closer to predicting actual consumer behavior in a complex reality. Choice-based research techniques can not only help us better reflect and predict reality, but they also have the benefits of being more engaging for respondents and more mobile-friendly, both of which lead to higher survey completion rates, a more representative sample, and ultimately, better quality and more accurate data.

Author: Sarah Faulkner, Owner, Faulkner Insights

July 2021
